Episode 4: Image Analysis in Light-sheet Microscopy: Big Data Processing, Handling, and Storage

Episode 4: Navigating Big Data in Light-Sheet Fluorescence Microscopy: Image Analysis, Registration, and Event-Driven Acquisition

In Episode 4 of The Light-Sheet Chronicles Podcast, host Dr Elisabeth Kugler is joined by Dr Kate McDole (MRC LMB, Cambridge) and Dr Jan Roden (Bruker Luxendo) to dissect the computational, algorithmic, and workflow strategies necessary for biomedical image analysis of light-sheet data.

They address challenges in handling, processing, and analysing terabyte‑scale, multidimensional light‑sheet datasets with multiple channels, views, tiles, and time points. They also highlight how computational, algorithmic, and workflow strategies are essential for making these complex LSFM datasets usable for biomedical image analysis.

Listen to this episode or scroll down to read more about the topics discussed.

Light-sheet fluorescence microscopy (LSFM) has revolutionised 3D biomedical imaging, enabling high-speed, low-phototoxicity observation of dynamic processes from subcellular structures to whole organisms. However, with this capability comes the challenge of handling, processing, and analysing terabyte-scale datasets.

In episode 4 of The Light-Sheet Chronicles, host Dr. Elisabeth Kugler is joined by two guests who bring different perspectives on light-sheet fluorescence microscopy:

- Dr. Kate McDole: Research Group Leader at MRC Laboratory of Molecular Biology in Cambridge.

- Dr. Jan Roden: Senior Software Architect at Bruker Luxendo, the light-sheet division of Bruker.

Together, they dissect the computational, algorithmic, and workflow strategies necessary for biomedical image analysis of light-sheet data.

TABLE OF CONTENTS:

- The Big Data Challenge in LSFM

- Registration: The Foundation for Meaningful Image Quantification

- Viewing Data and Metadata Management

- Modular and Open Biomedical Image Analysis Pipelines

- Event-Driven Microscopy and AI in Image Analysis

- Handling Big Data in Microscopy

- The Future of LSFM Biomedical Image Analysis

- Conclusion

- References

The Big Data Challenge in LSFM

LSFM experiments often generate multidimensional datasets comprising multiple colour channels, views, tiles, and time points. A single acquisition can easily range from tens of gigabytes to several terabytes. This is particularly the case for long-term in vivo studies such as time-lapses of embryogenesis or organoid development.

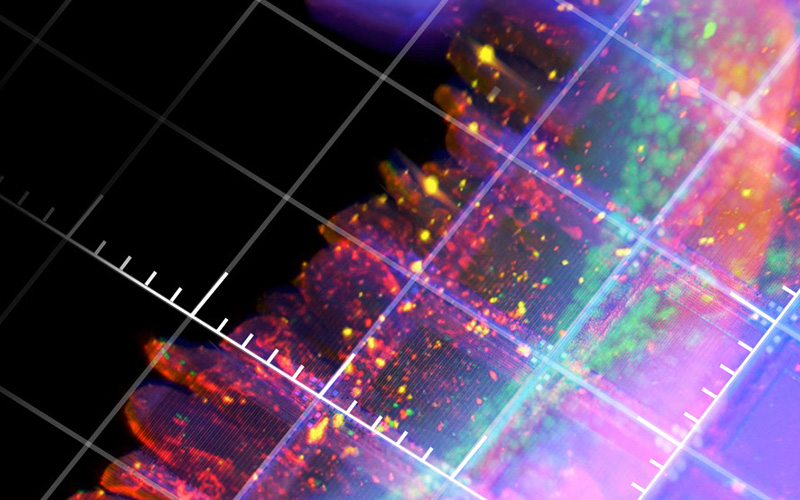

These datasets are inherently multidimensional, with hundreds of planes, several channels, many time points, multiple tiles, and often multi-angle views. To make sense of the data, stitching and registration are therefore required to produce coherent 3D reconstructions.
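To get a feel for how quickly these dimensions multiply, the uncompressed size of an acquisition can be estimated with simple arithmetic. The sketch below is illustrative only: the function name `dataset_size_bytes` and all acquisition parameters are hypothetical and not taken from the episode.

```python
from math import prod

def dataset_size_bytes(shape_xyz, channels, views, tiles, timepoints, bytes_per_voxel=2):
    """Uncompressed size of a multidimensional acquisition, in bytes."""
    voxels_per_stack = prod(shape_xyz)
    stacks = channels * views * tiles * timepoints
    return voxels_per_stack * stacks * bytes_per_voxel

# Hypothetical acquisition: 2048 x 2048 pixels, 500 planes, 16-bit voxels,
# 2 channels, 2 views, 1 tile, 200 time points.
size = dataset_size_bytes((2048, 2048, 500), channels=2, views=2, tiles=1, timepoints=200)
print(f"{size / 1e12:.2f} TB")  # prints "3.36 TB"
```

Even this modest, made-up experiment lands in the terabyte range before any processing output is written.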

Our guest experts note: “The challenge isn’t just the size; it’s also the multiple layers of analysis that need to be considered. From single-pixel fluorescence to whole sample registration.”

Registration: The Foundation for Meaningful Image Quantification

At the heart of many LSFM analysis workflows is image registration. In this context, registration is the process of spatially aligning multiple 3D datasets acquired from different views, time points, or samples. Effective registration is often an essential step for downstream applications like deconvolution, segmentation, tracking, lineage tracing, and morphometrics.

Key strategies include:

- Rigid vs. Non-Rigid Transformations: Rigid registration corrects for global translations and rotations, while non-rigid (elastic or deformable) registration accounts for local shifts, such as those caused by tissue deformation or optical aberrations [1].

- Feature-Based vs. Intensity-Based Approaches: Landmark-based alignment uses identifiable structures (e.g., brain regions or bones), whereas intensity-based methods optimise voxel-wise similarity metrics such as mutual information or cross-correlation [2].

- Iterative Refinement: To make alignment more robust, coarse-to-fine ("zoom-in") approaches are often used, in which both the resolution of the image data and the number of degrees of freedom of the alignment transform are increased step by step.

- Subvolume Registration and GPU Acceleration: To use the compute hardware more efficiently, the full image volume is broken up into chunks (subvolumes) that are processed in parallel. On GPUs, this can accelerate registration significantly, and it also keeps memory usage manageable (see [3] for more on GPU-accelerated image processing).
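As a minimal illustration of intensity-based rigid registration, the sketch below estimates a pure translation between two 3D volumes by locating the peak of their FFT-based cross-correlation. It is a simplified stand-in rather than the method used by any particular tool: it assumes NumPy, handles integer voxel shifts only, treats the volumes as periodic, and ignores rotation. The function name `estimate_shift` is hypothetical.

```python
import numpy as np

def estimate_shift(reference, moving):
    """Return the integer voxel shift that, applied to `moving` with np.roll,
    aligns it to `reference` (peak of the circular cross-correlation)."""
    ref_f = np.fft.fftn(reference)
    mov_f = np.fft.fftn(moving)
    cross_corr = np.fft.ifftn(ref_f * np.conj(mov_f)).real
    peak = np.unravel_index(np.argmax(cross_corr), cross_corr.shape)
    # Peak positions past the midpoint correspond to negative shifts (wrap-around).
    return tuple(int(p) if p <= s // 2 else int(p - s)
                 for p, s in zip(peak, cross_corr.shape))

# Synthetic test data: a random volume and a copy displaced by a known offset.
rng = np.random.default_rng(0)
reference = rng.random((16, 20, 24))
moving = np.roll(reference, (3, -2, 5), axis=(0, 1, 2))

shift = estimate_shift(reference, moving)
print(shift)  # (-3, 2, -5): the inverse of the applied displacement
aligned = np.roll(moving, shift, axis=(0, 1, 2))
```

In a coarse-to-fine scheme, an estimate like this would typically be computed on downsampled data first and then refined at higher resolution with more degrees of freedom.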

As discussed in this episode: “Even small misalignments at the subcellular level can propagate errors across segmentation and lineage analyses. Precision registration is non-negotiable if you want reproducible, quantitative results.”

Viewing Data and Metadata Management

Before processing, efficient 2D/3D data viewing is critical. Ideally, datasets are converted into resolution pyramids, which enable interactive zooming and fast panning and rotation of the image volumes without loading full-resolution data into memory [4]. Metadata should be comprehensive and standardised, ideally following OME conventions such as OME-TIFF and OME-NGFF, and data should be stored in efficient file formats such as Zarr and HDF5 [5, 6, 7].
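The idea behind a resolution pyramid can be sketched in a few lines: each level halves the previous one along every axis, so a viewer can load a coarse level for overview and finer levels only where needed. The example below is an in-memory illustration assuming NumPy and 2x2x2 block averaging; the function names are hypothetical, and a real pipeline would write each level to a chunked format rather than keep it in RAM.

```python
import numpy as np

def downsample_2x(volume):
    """Halve each axis by averaging non-overlapping 2x2x2 blocks."""
    z, y, x = (s - s % 2 for s in volume.shape)  # trim odd edges
    v = volume[:z, :y, :x].astype(np.float32)
    return v.reshape(z // 2, 2, y // 2, 2, x // 2, 2).mean(axis=(1, 3, 5))

def build_pyramid(volume, levels):
    """Return [full resolution, 1/2, 1/4, ...] with `levels` entries."""
    pyramid = [volume]
    for _ in range(levels - 1):
        pyramid.append(downsample_2x(pyramid[-1]))
    return pyramid

rng = np.random.default_rng(0)
volume = rng.random((64, 64, 64)).astype(np.float32)
pyramid = build_pyramid(volume, levels=3)
print([level.shape for level in pyramid])
# [(64, 64, 64), (32, 32, 32), (16, 16, 16)]
```

Each additional level adds only about one eighth of the previous level's size, so the full pyramid costs little extra storage compared with the original data.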

Dr. Jan Roden explains: “Good metadata is not optional; it underpins reproducibility, facilitates cross-lab data sharing, and allows AI algorithms to operate correctly on these massive datasets.”

Modular and Open Biomedical Image Analysis Pipelines

A recurring theme is the need for open, modular pipelines:

- Interoperability with Third-Party Software: Ideally, microscope software can seamlessly integrate with scripts from users, such as Python scripts, Fiji macros, MATLAB routines, or AI models.

- Automated, Event-Driven Processing: Data are often copied and processed only after the acquisition has finished. As a result, some data may turn out to be useless (e.g. when the sample wasn’t viable), and copying can take hours. In a perfect world, pipelines would respond to freshly acquired data [8] and process it during acquisition, enabling near real-time analysis.

- Scalability and Memory Management: Distributed computing frameworks or containerised environments (e.g., Docker, Singularity) help manage computational load while maintaining reproducibility.

Dr. McDole’s lab employs real-time maximum intensity projections during acquisition to monitor sample development and decide whether to continue or adjust imaging parameters. This is critical for multi-day embryogenesis studies, where samples must be monitored even when no one is in the lab.
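A maximum intensity projection collapses a 3D stack into a single 2D image by keeping the brightest voxel along one axis, which makes it cheap enough to compute for every incoming time point. The sketch below assumes NumPy and stacks ordered as (z, y, x); the function names are hypothetical and the code is not taken from any lab's actual pipeline.

```python
import numpy as np

def max_intensity_projection(stack, axis=0):
    """Collapse a 3D stack to 2D by taking the maximum along `axis`."""
    return stack.max(axis=axis)

def projections_over_time(stacks):
    """Yield one projection per incoming time point (any iterable of stacks)."""
    for stack in stacks:
        yield max_intensity_projection(stack)

# Synthetic example: an empty stack with a single bright voxel.
stack = np.zeros((4, 5, 6), dtype=np.uint16)
stack[2, 1, 3] = 7
mip = max_intensity_projection(stack)
print(mip.shape, mip[1, 3])  # (5, 6) 7
```

Because `projections_over_time` is a generator, it can be fed stacks as they arrive and produce a lightweight preview without waiting for the acquisition to finish.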

Event-Driven Microscopy and AI in Image Analysis

To handle dynamic biological processes, microscopes can leverage event-driven feedback [8], where software reacts autonomously to sample behaviour, such as:

- User-Defined Triggers: Predefined fluorescence intensity thresholds, morphology changes, or reporter expression events.

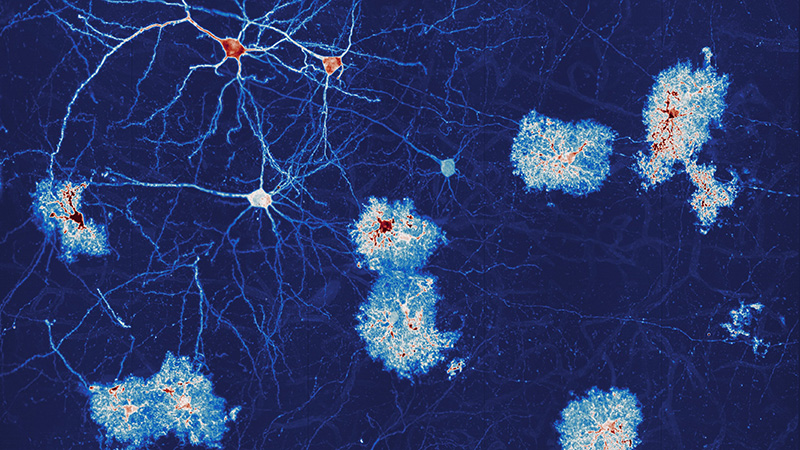

- Machine Learning Recognition: Neural networks, such as U-Net [9] or StarDist [10], detect target events in real time, triggering adaptive acquisition strategies such as faster frame rates or high-resolution zoom-ins to capture interesting moments.
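The simplest form of such feedback is a user-defined threshold trigger: after each frame, a summary statistic is compared with a threshold and the acquisition interval is adjusted accordingly. The sketch below is a toy illustration in plain Python. The function name, the threshold, and the slow and fast intervals are all hypothetical, and a real system would call the microscope's control software instead of returning a list.

```python
def run_feedback_loop(frame_means, threshold, slow_s=300.0, fast_s=30.0):
    """Return the acquisition interval (seconds) chosen after each frame.

    frame_means: mean fluorescence intensity of each acquired frame.
    An "event" is any frame whose mean intensity reaches the threshold.
    """
    intervals = []
    for mean_intensity in frame_means:
        event_detected = mean_intensity >= threshold
        intervals.append(fast_s if event_detected else slow_s)
    return intervals

# Hypothetical intensity trace: the reporter switches on in frames 3 and 4.
intervals = run_feedback_loop([10, 12, 55, 60, 20], threshold=50)
print(intervals)  # [300.0, 300.0, 30.0, 30.0, 300.0]
```

A machine-learning trigger follows the same pattern, with the threshold comparison replaced by the output of a trained network.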

Dr. Kate McDole explains: “Event-driven approaches let the microscope behave almost autonomously, which reduces human intervention and maximises sample throughput.”

Handling Big Data in Microscopy

Processing and storage require careful orchestration:

- Data Tiling and Stitching: Large samples exceeding a single field of view (FOV) are imaged in tiles and later computationally fused [11].

- Reducing Data Size: Pre-processing removes empty data and artefacts, reducing dataset size and improving downstream analysis. For example, in blood vessel datasets the vessels themselves make up only 3-15% of the data; the rest is background, which makes the data unnecessarily large and can even bias algorithms towards background [12, 13].

- Intermediate Storage: Data is moved from acquisition computers to networked storage or HPC clusters to avoid interfering with ongoing experiments.
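One simple way to remove empty data is to crop each volume to the bounding box of its foreground. The sketch below assumes NumPy and a plain global intensity threshold, which is a deliberate simplification; the function name `crop_to_foreground` and the threshold and margin values are hypothetical. It also returns the slices used, so results can be mapped back to the original coordinates.

```python
import numpy as np

def crop_to_foreground(volume, threshold, margin=2):
    """Crop to the bounding box of voxels above `threshold`, plus a margin.

    Returns the cropped volume and the tuple of slices that produced it.
    """
    coords = np.argwhere(volume > threshold)
    if coords.size == 0:
        raise ValueError("no voxels above threshold")
    lo = np.maximum(coords.min(axis=0) - margin, 0)
    hi = np.minimum(coords.max(axis=0) + 1 + margin, volume.shape)
    box = tuple(slice(int(a), int(b)) for a, b in zip(lo, hi))
    return volume[box], box

# Synthetic volume that is mostly empty background.
volume = np.zeros((50, 60, 70), dtype=np.uint16)
volume[10:20, 15:30, 20:40] = 100
cropped, box = crop_to_foreground(volume, threshold=50)
print(cropped.shape)  # (14, 19, 24)
```

For volumes that do not fit in memory, the same bounding box could be computed on a downsampled copy and scaled up before cropping.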

GPU acceleration, multi-threaded pipelines, and subvolume processing are now standard practices for maintaining throughput in large-scale LSFM studies.
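Subvolume processing can be sketched as tiling the volume into blocks and handing each block to a worker. The example below assumes NumPy and Python's standard thread pool; the function names and chunk sizes are hypothetical. It is only valid as written for voxel-wise operations: filters that look at neighbouring voxels would need overlapping chunks, which this sketch leaves out.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

import numpy as np

def chunk_slices(shape, chunk):
    """Yield tuples of slices that tile an array of `shape` with blocks of `chunk`."""
    ranges = [range(0, s, c) for s, c in zip(shape, chunk)]
    for starts in product(*ranges):
        yield tuple(slice(st, min(st + c, s))
                    for st, c, s in zip(starts, chunk, shape))

def process_in_chunks(volume, chunk, func, workers=4):
    """Apply a voxel-wise `func` to each subvolume in parallel threads."""
    out = np.empty_like(volume)

    def work(sl):
        out[sl] = func(volume[sl])  # chunks do not overlap, so writes are independent

    with ThreadPoolExecutor(max_workers=workers) as pool:
        list(pool.map(work, chunk_slices(volume.shape, chunk)))  # surfaces worker errors
    return out

volume = np.arange(5 * 6 * 7, dtype=np.float32).reshape(5, 6, 7)
result = process_in_chunks(volume, chunk=(2, 4, 4), func=lambda block: block * 2)
print(np.array_equal(result, volume * 2))  # True
```

The same chunk iterator can drive GPU or cluster workers instead of threads, since each block is processed independently.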

The Future of LSFM Biomedical Image Analysis

Integration of real-time processing, event-driven feedback, modular pipelines, and AI represents the next frontier. Researchers can capture more biological information with higher temporal and spatial resolution while maintaining reproducibility and managing large datasets efficiently.

As discussed in this episode: “The goal isn’t just bigger datasets, it’s smarter acquisition and analysis. We want to extract maximum biological insight with minimal waste of time, computational resources, or samples.”

Conclusion

This episode of The Light-Sheet Chronicles shows how computational strategies can make light-sheet data acquisition, processing, and analysis more meaningful.

Key points to take away:

- Image registration is the backbone of reproducible 3D reconstructions.

- Modular, open pipelines enable scalable, multi-terabyte data processing.

- Event-driven acquisition and AI integration empower researchers to capture complex biological phenomena in real time.

- Metadata management and resolution pyramids are essential for performance, reproducibility, and cross-lab collaboration.

For researchers in developmental biology, organoid studies, or whole-organ imaging, these practices define future-facing, high-throughput, and quantitative light-sheet fluorescence microscopy.

References

1. B. Khajone, R. Kokate, and V. Shandilya, “A Survey of Image Registration Techniques,” International Journal of Research in Information Technology, vol. 2, no. 4, pp. 554–560, 2014.

2. B. Zitová and J. Flusser, “Image registration methods: a survey,” Image and Vision Computing, vol. 21, no. 11, pp. 977–1000, Oct. 2003, doi: 10.1016/S0262-8856(03)00137-9.

3. R. Haase et al., “CLIJ: GPU-accelerated image processing for everyone,” Nat Methods, vol. 17, no. 1, pp. 5–6, Jan. 2020, doi: 10.1038/s41592-019-0650-1.

4. E. Adelson, C. Anderson, J. Bergen, P. Burt, and J. Ogden, “Pyramid Methods in Image Processing,” RCA Eng., vol. 29, Nov. 1983.

5. R. Massei et al., “Optimizing Image Data Management: A Workflow-Driven Approach for FAIR and Reusable High-Content Screening pipelines with OMERO,” Research Square, May 06, 2025, doi: 10.21203/rs.3.rs-6214250/v1.

6. K. Miura and N. Sladoje, Eds., Bioimage Data Analysis Workflows, in Learning Materials in Biosciences. Cham: Springer International Publishing, 2020. doi: 10.1007/978-3-030-22386-1.

7. J. Moore et al., “OME-NGFF: a next-generation file format for expanding bioimaging data-access strategies,” Nat Methods, vol. 18, no. 12, pp. 1496–1498, Dec. 2021, doi: 10.1038/s41592-021-01326-w.

8. D. Mahecic, W. L. Stepp, C. Zhang, J. Griffié, M. Weigert, and S. Manley, “Event-driven acquisition for content-enriched microscopy,” Nat Methods, vol. 19, no. 10, pp. 1262–1267, Oct. 2022, doi: 10.1038/s41592-022-01589-x.

9. O. Ronneberger, P. Fischer, and T. Brox, “U-Net: Convolutional Networks for Biomedical Image Segmentation,” in Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015, N. Navab, J. Hornegger, W. M. Wells, and A. F. Frangi, Eds., in Lecture Notes in Computer Science. Cham: Springer International Publishing, 2015, pp. 234–241. doi: 10.1007/978-3-319-24574-4_28.

10. M. Weigert and U. Schmidt, “Nuclei Instance Segmentation and Classification in Histopathology Images with Stardist,” in 2022 IEEE International Symposium on Biomedical Imaging Challenges (ISBIC), Mar. 2022, pp. 1–4. doi: 10.1109/ISBIC56247.2022.9854534.

11. S. Preibisch, S. Saalfeld, and P. Tomancak, “Globally optimal stitching of tiled 3D microscopic image acquisitions,” Bioinformatics, vol. 25, no. 11, pp. 1463–1465, Jun. 2009, doi: 10.1093/bioinformatics/btp184.

12. M. M. Rahman and D. N. Davis, “Addressing the Class Imbalance Problem in Medical Datasets,” IJMLC, pp. 224–228, 2013, doi: 10.7763/IJMLC.2013.V3.307.

13. E. C. Kugler et al., “Zebrafish Vascular Quantification (ZVQ): a tool for quantification of three-dimensional zebrafish cerebrovascular architecture by automated image analysis,” Development, p. dev.199720, Jan. 2022, doi: 10.1242/dev.199720.